Introduction

Whether it is the crescendo-building tune in the opening of the Star Wars saga or the airy, delicate quality of “Hedwig’s Theme” from the Harry Potter films, some songs are instantly recognizable. What is it about these songs that allows them to resonate with the human experience so strongly that they leave an enduring mark on our collective cultural history and public memory? Some would argue that it is the human fingerprint left on these works of art that makes them not only irreplicable and one-of-a-kind but also able to exert an immeasurable societal impact. This article explores this assumption by reviewing literature that addresses both the merits and pitfalls of merging advances in computer science with musical production and delivery. The research question guiding this examination asks, “Is music as an art form a fundamentally human endeavor, or should it be?”

By introducing this question and seeking to respond to it through empirical studies, this article aims to contribute to ongoing conversations regarding the role of artificial intelligence (AI) in society. Through a methodical and comprehensive examination of the academic literature on this topic, this article argues against an either-or view of AI that presents it as wholly good or bad and instead offers a more balanced understanding of both the possibilities and limitations of digital technologies (AI included) in the production of artistic content. To begin, this article describes the methodology behind this literature review, which involved using qualitative coding to generate three overarching themes from a dataset of 80 articles. The discussion section that follows addresses each of these three themes: (1) generative AI for music composition, including digital audio workstations (DAWs) and coding platforms, (2) algorithmic recommendations, and (3) ethical debates surrounding the use of digital technologies for music creation and delivery. The article concludes by proposing next steps as the fields of computer science and music become more integrated, suggesting a hybridized, collaborative model for computer-human music creation.

All of this is done to offer a more holistic view of AI in musical production and curation, one that does not perpetuate an already prevalent narrative that portrays AI as loathsome, ominous, or an outright threat. Acknowledging both the possibilities of AI’s applications in music and their limitations is what will ultimately lead to more ethical, inclusive, and accessible innovation.

This review also draws on broader theoretical perspectives to situate the debate about AI and musical creativity. From the standpoint of creativity theory, Boden (2004) distinguishes between combinational, exploratory, and transformational creativity, a framework that helps clarify whether AI systems simply remix prior patterns or meaningfully expand a musical style. Related arguments in the digital humanities emphasize that cultural production is increasingly shaped by computational infrastructures, making it necessary to rethink authorship, intentionality, and artistic agency in hybrid human–AI environments. Together, these perspectives offer a conceptual foundation for understanding how AI reshapes, rather than replaces, human creative practice.

Methods

To add to the scholarly conversation on the integration of AI and music production, Google Scholar and the Association for Computing Machinery (ACM) Digital Library were used to conduct a comprehensive literature review of this topic. Google Scholar was selected for the breadth of its coverage and because it includes many interdisciplinary articles not strictly related to computer science. The ACM Digital Library, on the other hand, was selected as a complementary database because of the sheer number of peer-reviewed computer science publications it hosts. Combining a general source with a more specialized one helped ensure that the scope of this review was both broad and sufficiently focused.

To create the dataset for this review, the following search terms were used to browse these databases: AI and music, music production and artificial intelligence, computer-generated music, digital audio workstation, live coding, algorithmic composition, and machine learning in music. The results were not filtered by year, so that current perspectives could be combined with a historical overview of developments and innovations in this field. Additionally, because computer science is considered a very applied field, no filters were imposed on publication type; this way, trade publications and non-academic sources could be included as well. In keeping with the applied focus of the field, discussions that were solely theoretical in nature were excluded. Articles written in a language other than English were also excluded from the dataset. Since the data for this review came from existing publications, there were no concerns regarding ethical research conduct.

Results

This search resulted in a total of 80 eligible sources, consisting of peer-reviewed journal articles, books, conference proceedings, and technical or industry reports. To ensure that the sample was not skewed towards one field of study or another, it was evenly split between Google Scholar (n = 40) and the ACM Digital Library (n = 40). Qualitative coding techniques, such as those outlined by Creswell and Poth (2016) and Saldana (2014), were used to code the data (i.e., the different sources). These initial codes, which served to sort and label the data, included: music software, AI music recommendations, coding music, AI music productions, bias in tech, access and cost, and creativity. These codes were then organized in an Excel spreadsheet, which helped identify key themes across the data. The key themes represented in the sources included in the dataset were as follows:

Generative AI for Music Composition

This theme was present in articles discussing how machine learning models generate original compositions. Because of how broad this theme was, it was then divided into two subthemes. Subtheme 1 included literature focusing on digital audio workstations (DAWs). The second subtheme, Subtheme 2, focused on contemporary coding platforms.

Algorithmic Recommendations

This theme encompassed literature focused on how AI is used to suggest songs to users and cultivate specific listening preferences and habits.

Ethical Implications

This theme combined the two subthemes of (1) accessibility concerns and (2) digital bias. It includes literature that explores the potential of AI in digital music creation as it offers possibilities for making the world a more just place, while at the same time acknowledging that it can also contribute to certain injustices.

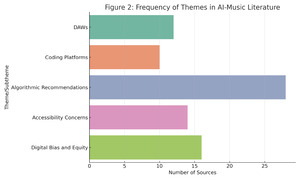

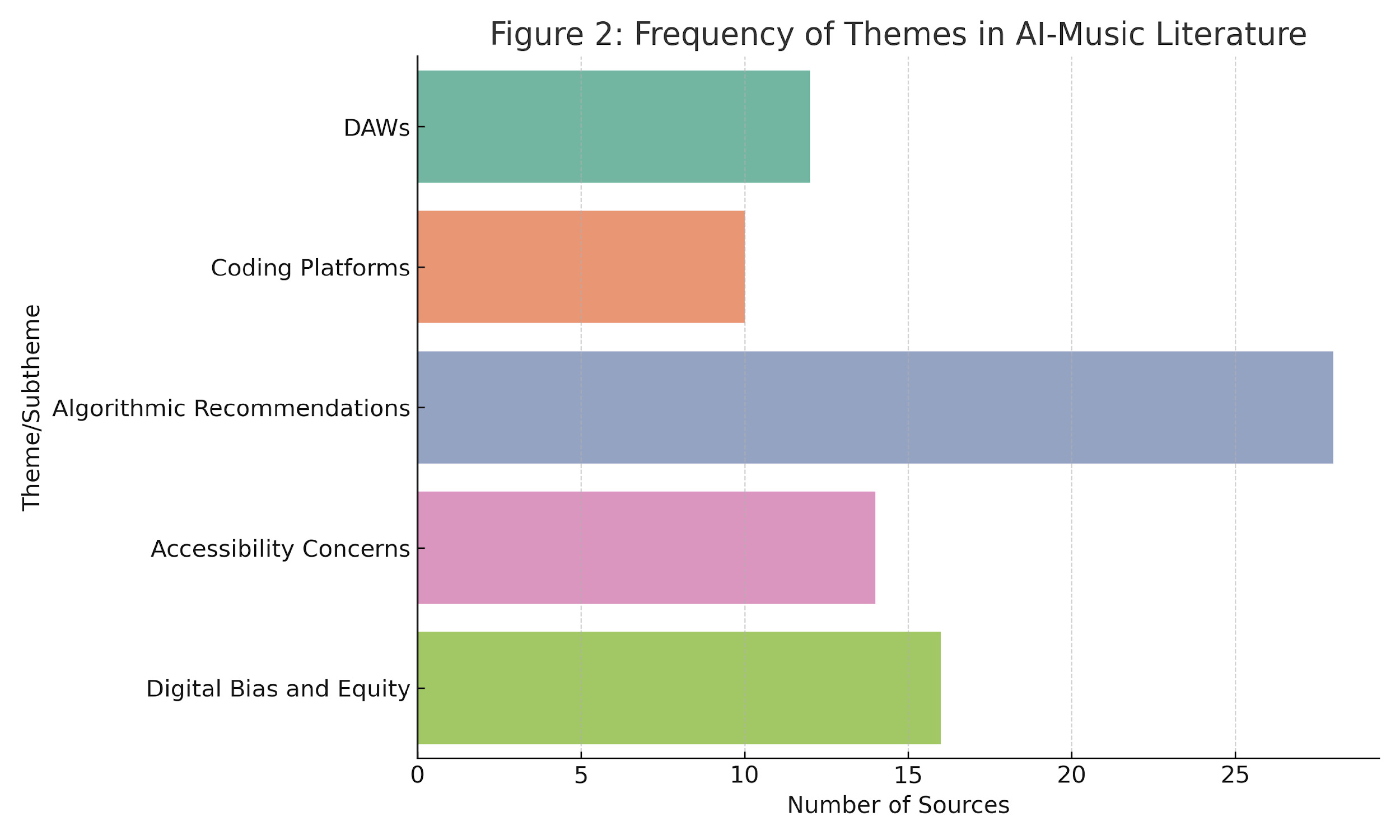

In cases where a source addressed multiple themes, it was counted towards each relevant category. Sources that appeared in both databases were only counted once, ensuring that all duplicates were excluded. Sources that did not neatly fit into these themes were categorized as “Other,” and are not included in the discussion presented here. The frequency of each theme within the dataset is displayed in Figure 1.
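The counting rules described above can be illustrated with a minimal sketch. It assumes each record is a (title, database, themes) tuple; the titles, theme labels, and the `tally_themes` helper are hypothetical placeholders for illustration and are not the spreadsheet workflow or sources actually used in this review.

```python
from collections import Counter

# Hypothetical records: (title, database, themes). These are illustrative
# placeholders, not sources from the actual dataset.
records = [
    ("Source A", "Google Scholar", ["Algorithmic Recommendations"]),
    ("Source A", "ACM Digital Library", ["Algorithmic Recommendations"]),  # duplicate
    ("Source B", "ACM Digital Library", ["Generative AI: DAWs", "Ethical Implications"]),
    ("Source C", "Google Scholar", []),  # fits none of the themes
]


def tally_themes(records):
    """Count each unique source once per theme it addresses."""
    seen = set()
    counts = Counter()
    for title, _database, themes in records:
        key = title.strip().lower()
        if key in seen:  # already counted from the other database
            continue
        seen.add(key)
        # A source with no matching theme falls into "Other".
        for theme in themes or ["Other"]:
            counts[theme] += 1
    return counts


theme_counts = tally_themes(records)
for theme, n in theme_counts.most_common():
    print(f"{theme}: {n}")
```

Because a source can count towards several themes, the theme totals in a tally like this can add up to more than the number of unique sources.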

Figure 1 illustrates not only the distribution of themes across the 80 selected sources but also reveals which areas dominate contemporary AI-music scholarship. The most frequently occurring theme is Algorithmic Recommendations, appearing in nearly 30 sources. This concentration suggests that current research is heavily invested in understanding how AI shapes listening behavior, influences user preferences, and mediates power relations between platforms and listeners. In contrast, Generative AI for Music Composition is split across two subthemes, DAWs and coding platforms, with each receiving moderate but comparable attention. This indicates that scholars view AI-assisted creativity as multidimensional, spanning both consumer-facing music production tools and more technical, code-based environments. The theme Ethical Implications (accessibility concerns + digital bias) appears less frequently than algorithmic recommendations but still occupies a substantial portion of the dataset. This distribution highlights that while ethical concerns are present in the field, they are often treated as secondary to technical or user-experience questions. Taken together, the figure demonstrates that although the field is expanding in multiple directions, scholarly attention is unevenly distributed, with recommendation algorithms receiving the most sustained focus.

Discussion

Generative AI for Music Composition

While the integration of computers and music may seem like a recent invention, the two fields have in fact been collaborating for several decades. As far back as 1951, Australia’s first digital computer, the Commonwealth Scientific and Industrial Research Automatic Computer (CSIRAC), became the first computer to generate music (Doornbusch, 2004). A few years later, in 1957, the fields of music and computer science saw further integration when the University of Illinois’ Lejaren Hiller and Leonard Isaacson created the “Illiac Suite,” the first musical score to be composed by a computer using a predefined set of rules (i.e., algorithms) (Funk, 2018).

The development of AI-assisted music began with an early experimentation phase from 1951 to 1957, marked by foundational advances in computer-generated music and algorithmic composition. This was followed by a long period of relatively limited mainstream innovation from 1957 to 2000. The digital production era then emerged between 2000 and 2014, characterized by the rise of digital audio workstations (DAWs) and live coding tools. Finally, from around 2016 to the present, the field has experienced rapid growth during a period of modern AI acceleration, driven by advances in generative AI and music technologies.

Placing the development of AI-assisted music within a longer historical trajectory shows that what is popularly understood as a “recent” innovation is, in fact, the continuation of a seventy-year process. The earliest milestones, CSIRAC in 1951 and the Illiac Suite in 1957, appear at the far left of the timeline, emphasizing how the foundations of algorithmic composition emerged well before the modern digital era. The long period of relative quiet that follows highlights an important pattern: early experiments in computer-generated music were technologically significant, yet adoption was slow due to limited computational power and the absence of accessible music interfaces. This gap contrasts sharply with the cluster of innovations near the right side of the timeline, where the rise of online recommendations (e.g., Spotify in 2006) and the release of contemporary AI-music tools (e.g., OpenAI’s MuseNet, Magenta, and AIVA) demonstrate a rapid acceleration in both research and commercialization. The compression of major developments after 2010 reveals a field that has shifted from experimental to mainstream, aligning with the broader expansion of machine learning across creative industries. Taken together, the timeline helps contextualize Subtheme 1 by showing that today’s DAWs are not isolated inventions but the culmination of decades of iterative experimentation in algorithmic and computational music-making. Now, with such advances, an entire orchestra of strings, woodwinds, percussion, and brass is available within the confines of a digital audio workstation (DAW), the focus of Subtheme 1.

Digital Audio Workstations (DAWs)

A digital audio workstation (DAW) is “software designed to enable multitrack audio and MIDI recording, editing and mixing on your computer” (Duggal, 2024). DAWs appeared frequently in the reviewed sources (n = 24), with Ableton Live (Zala, 2018), FL Studio (Reuter, 2022), and Logic Pro (Huang & Wu, 2016) among the most commonly referenced. These programs rely on coded systems to support recording, layering, sound manipulation, and the use of virtual instruments triggered by MIDI instructions. Across these sources, scholars often distinguished between event-driven and procedural approaches to computational composition (Roberts & Wakefield, 2016). Event-driven systems respond to user actions, such as key presses, and treat the human performer as the primary initiator of sonic change (Schipor & Vatavu, 2023). In contrast, procedural systems rely on predefined rules derived from music theory or algorithmic structures to generate or transform musical materials without direct user input (Anders & Miranda, 2011). Research on video-game scoring, for example, shows how procedural rules create adaptive soundscapes that shift in response to gameplay dynamics (Kaushik, 2025). Together, these approaches demonstrate that DAWs operate at the intersection of human-driven interaction and automated rule-based generation, highlighting the hybrid nature of contemporary digital composition.
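The distinction between event-driven and procedural approaches can be made concrete with a minimal sketch. It is an illustration under stated assumptions rather than how any particular DAW is implemented: the key-to-note mapping, the `on_key_press` handler, and the `arpeggio` rule are all hypothetical, and notes are represented as plain MIDI note numbers (60 is middle C).

```python
# Hypothetical mapping from computer keys to MIDI note numbers (60 = middle C).
KEY_TO_NOTE = {"a": 60, "s": 62, "d": 64, "f": 65}

# Semitone offsets of the seven degrees of a major scale.
MAJOR_SCALE_STEPS = [0, 2, 4, 5, 7, 9, 11]


def on_key_press(key):
    """Event-driven: a note sounds only because the performer pressed a key."""
    return KEY_TO_NOTE.get(key)  # None when the key is not mapped


def arpeggio(root, length):
    """Procedural: a fixed rule walks scale degrees 1, 3, 5 with no user input."""
    triad = [MAJOR_SCALE_STEPS[i] for i in (0, 2, 4)]  # offsets 0, 4, 7
    # Every three notes, the pattern moves up one octave (12 semitones).
    return [root + triad[i % 3] + 12 * (i // 3) for i in range(length)]


performed = [on_key_press(k) for k in "asdx"]
generated = arpeggio(60, 6)
print(performed)  # [60, 62, 64, None]
print(generated)  # [60, 64, 67, 72, 76, 79]
```

In the first case the human initiates every sonic change; in the second, the musical material follows entirely from a rule grounded in music theory.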

Coding Platforms

Building on the idea of rule-based and algorithmic structures, the reviewed literature also discussed coding platforms as a distinct mode of AI-assisted music creation. Unlike DAWs, which rely on graphical interfaces and menu-based actions, coding platforms require users to write code that directly specifies musical behavior. Code determines what notes will play, how rhythms evolve, and which effects are applied, offering a more granular and programmable approach to composition. Two platforms appeared most frequently in the dataset: Sonic Pi and TidalCycles. Sonic Pi allows users to compose by writing commands in Ruby, using loops, functions, and real-time modifications to shape the music. Researchers describe this workflow as “a sequence of statements,” where altering the structure of code reshapes musical outcomes (Aaron et al., 2016). TidalCycles, built in Haskell, is especially prominent in live-coding performance communities, where performers continually update code to manipulate music in real time (TIDAL, 2014). Together, these platforms illustrate how algorithmic thinking has become a creative medium in itself, allowing musicians to engage compositionally with the logic of computation.
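The idea that code determines notes, rhythms, and effects can be sketched in a few lines. The example below is written in plain Python for illustration only; it does not use or reproduce the Sonic Pi or TidalCycles APIs, and the `NoteEvent` type and `render_loop` function are hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass
class NoteEvent:
    start_beat: float      # when the note begins, in beats
    midi_note: int         # pitch as a MIDI note number (60 = middle C)
    duration_beats: float  # how long the note lasts, in beats
    effect: str            # label for an effect applied to the note


def render_loop(notes, rhythm, effect, repeats):
    """Expand a note pattern and a rhythm pattern into a list of timed events."""
    events = []
    beat = 0.0
    for _ in range(repeats):
        for note, duration in zip(notes, rhythm):
            events.append(NoteEvent(beat, note, duration, effect))
            beat += duration
    return events


original = render_loop([60, 64, 67], [1.0, 0.5, 0.5], "reverb", repeats=2)
# Changing the arguments, as a live coder might mid-performance,
# reshapes the musical outcome without touching the loop itself.
edited = render_loop([60, 63, 67], [0.5, 0.5, 1.0], "echo", repeats=2)

for event in original:
    print(event)
```

Swapping one note (64 to 63) turns the major pattern into a minor one, which mirrors the point that altering the structure of code reshapes the music.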

Algorithmic Recommendations

Another large subset of the dataset addressed AI for algorithmic music recommendations, specifically the capacity of AI to tailor new music offerings to users. These papers present AI as using deep learning, a process in which a model is fed large amounts of data so that it can begin to identify patterns and key characteristics in that data (Goodfellow et al., 2016), to detect user preferences and recommend new music based on those preferences. Some of the more commonly referenced platforms that harness AI to suggest new songs and deliver personalized playlists include Spotify, YouTube Music, and Apple Music. Within the literature, however, digital technologies like AI are presented as doing much more than recommending music; AI can also use algorithms to create music. OpenAI’s MuseNet, Google’s Magenta, and AIVA were all introduced as platforms that use neural networks, which learn by example, to compose songs, or parts of songs such as melodies, in a specific style or genre. A spectrum of human-computer collaboration is presented below.

Human–AI Music Collaboration Spectrum

- Traditional: music composition (human-only), fully human-created music
- Hybrid: systems (DAWs, coding platforms), human + technology collaboration
- Automated: AI compositions (e.g., MusicLM, AIVA), fully AI-generated music

In their article, “Music Generation by Deep Learning—Challenges and Directions,” Briot and Pachet (2020) explore how deep learning allows AI to imitate the styles of existing songs to generate its own sequences of music. This imitation, however, underlies one of the greatest critiques of computer-assisted music production: a lack of creativity (Mycka & Mańdziuk, 2024). Briot and Pachet argue that because the AI is trained on preexisting musical data, the result may come across as unoriginal. To address this issue, they suggest a system that invites greater user control and customization. The system they envision would be much more interactive, which would, ideally, avoid some of the pitfalls of AI in music production, such as the lack of creativity mentioned previously.

Fiebrink and Caramiaux (2018) argue for something similar. In looking at how machine learning algorithms can be used to create music, the researchers assert that these tools would be more appropriately regarded as partners in the creative music-making process. They emphasize that user-centered design that is responsive to user input can lead to more creative collaborations between musicians and these machines. Machine learning systems should be adaptable and flexible, in addition to collaborative. They should respond to and account for different musical contexts, as well as the objectives of the musician/user, instead of forcing the musical production to fit predefined workflows.

Counterarguments: AI Cannot Replicate Human Emotional Expression

While many scholars approach AI as a promising collaborator, a substantial body of work argues more strongly that AI systems cannot replicate the emotional, embodied, and contextual dimensions of human musical expression. Scholars in music cognition note that emotional nuance in performance is closely tied to lived experience, bodily intention, and socio-cultural meaning, dimensions that machine-learning models cannot internalize (Jackendoff, 2009). Similarly, Davies (2011) contends that musical expression is fundamentally grounded in human emotional agency, and that AI systems, which operate through pattern replication, can only simulate emotional tone rather than experience or generate it. These critiques push the discussion beyond questions of stylistic imitation: they challenge whether computational systems can ever meaningfully participate in aesthetic communication. Including these perspectives reveals a deeper tension in the literature between those who treat AI as a creative partner and those who see it as a fundamentally limited imitator, unable to access the human emotional substrate that gives music its expressive force.

Ethical Implications

Accessibility Concerns

The third theme that appeared across the literature took on an ethical component. Many researchers, such as Pedrini et al. (2020), acknowledge that several of the DAWs and coding platforms mentioned above make musical production technologies accessible by offering users an affordable solution that does not require expensive equipment. In fact, with these digital technologies, an aspiring artist can create the sounds produced by an entire orchestra for the price of a laptop and a DAW subscription (which ranges anywhere from $99 to $800). Therefore, whereas musical production was previously open only to people with extensive financial means, innovations in digital technologies now allow users of various income levels to participate in music production.

The articles included in this dataset also considered accessibility in terms of users’ abilities. People with various mobility issues may experience difficulty in playing the piano or strumming a guitar. However, some scholars argue that the technologies described in this article make musical production accessible to these individuals, as all they have to do is press a key or click a mouse. It is worth pointing out, though, that these technologies may not be user-friendly for people of all abilities. Pedrini et al. (2020) noted that DAWs such as Avid Pro Tools and Cockos REAPER may present accessibility concerns for blind and visually impaired people.

The theme of ethical debates related to the accessibility of these technologies also included a concern for the technology gap, often referred to as the “Digital Divide.” While more places throughout the globe are gaining access to technology through improved infrastructure, many places are still without reliable internet services. Additionally, the cost of software and hardware may still be too high for some. That is why scholars like Yfantis et al. (2010) argue that digital musical production is still subject to accessibility concerns in terms of costs and technology access.

Digital Equity and Bias

Finally, the literature also examined cultural biases embedded in algorithm-based recommendation systems. Seaver’s ethnography of recommendation engineers shows how these systems subtly reproduce North American listening norms, shaping what users perceive as “good” or “relevant” music (Seaver, 2022). Similarly, Kowald et al. (2020) found that algorithmic recommendations tend to amplify already-popular tracks, which risks marginalizing less mainstream genres, artists outside the West, and musicians from underrepresented communities. Some scholars point to more socially grounded recommendation models as a corrective. Furini and Fragnelli (2024), for example, argue that drawing on a user’s social network can diversify recommendations and reduce the reproduction of mainstream cultural hierarchies. These debates illustrate that while AI systems can expand musical access, they may also reinforce inequalities unless intentionally designed to counter dominant cultural biases.
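The amplification of already-popular tracks can be illustrated with a deliberately simple toy model. This is a sketch under strong assumptions, not the method or data of any study cited above: it assumes a recommender that only ever surfaces the most-played tracks and listeners who play only what is recommended. The track names, play counts, and the `simulate_feedback` and `share` helpers are hypothetical.

```python
def simulate_feedback(play_counts, top_k, rounds, plays_per_round):
    """Each round, recommend the top_k most-played tracks and split new plays among them."""
    counts = dict(play_counts)
    for _ in range(rounds):
        ranked = sorted(counts, key=counts.get, reverse=True)
        for track in ranked[:top_k]:
            counts[track] += plays_per_round // top_k
    return counts


def share(counts, track):
    """Fraction of all plays that belong to one track."""
    return counts[track] / sum(counts.values())


# Hypothetical starting catalogue with made-up play counts.
start = {
    "mainstream_hit": 50,
    "popular_single": 30,
    "regional_folk": 15,
    "indie_debut": 5,
}
end = simulate_feedback(start, top_k=2, rounds=10, plays_per_round=20)

print(round(share(start, "regional_folk"), 3), "->", round(share(end, "regional_folk"), 3))
```

In this toy setting the less popular tracks never lose plays in absolute terms, yet their share of total listening shrinks every round, which is the kind of feedback loop that diversity-oriented recommendation designs aim to interrupt.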

Authorship, Ownership, and Intellectual Property

A growing body of scholarship highlights another major ethical dimension in AI-generated music: the problem of authorship, ownership, and intellectual property. As generative systems become more capable of producing complex musical compositions, scholars question who should be recognized as the “author” of an AI-assisted work: the human operator, the model’s developers, or the artists whose music was used in training datasets. This debate reflects broader uncertainties in copyright law, which traditionally defines authorship in terms of human creativity and intention. Ownership concerns also arise because many AI-music models are trained on large corpora of copyrighted recordings that artists did not explicitly consent to share. Researchers argue that this creates a form of “derivative labor,” where the creative contributions of musicians become raw material for model development without compensation or acknowledgment. In the context of music production, this means that AI-generated compositions may unintentionally reproduce stylistic elements from copyrighted works, raising questions about infringement and fair use. Several studies emphasize that these issues are not merely legal but ethical. If AI systems rely on the uncredited labor of artists, particularly those from marginalized communities, the resulting music risks perpetuating existing inequities in the cultural economy (Elish & boyd, 2018). As a result, scholars increasingly call for clearer guidelines on dataset transparency, consent, and attribution to ensure that AI-generated music respects the intellectual and creative rights of human artists.

Conclusion: Towards a Collaborative Computing Model

In summary, this review of the literature on digital music production presented several key themes characterizing leading research in the fields of music and computer science. The first theme focused on generative AI for music composition, including digital audio workstations (DAWs) and coding platforms; the second on algorithm-based recommendations for music delivery; and the third on ethical debates within the realm of digital music production. Each of these themes offers key takeaways worthy of further consideration as musicians and computer scientists aim to create future interdisciplinary collaborations. In fact, greater collaboration between humans and computers was identified as a need by several of the authors featured in this dataset. These scholars recommended refining the design of DAWs and coding platforms to make them more interactive and less constrained by predefined workflows. By giving users more freedom in their productions, these collaborative elements could also result in greater creativity in the content they generate, which would alleviate concerns about AI-assisted musical production lacking creativity.

Accessibility and inclusivity were also important points raised in the literature. Several scholars noted that computer-assisted musical production allowed for people of all abilities and income levels to participate, whereas others pointed out that users with visual impairments might be excluded, as well as those who live in areas without adequate technological infrastructure. Researchers also highlighted the biases that exist within AI-informed recommendation systems that can push users to listen to music that reflects mainstream media and Western culture. These systems can therefore exclude emerging or less popular artists, and those who come from cultures outside of North America and Europe.

As findings from this literature review suggest, the use of digital technology for musical composition and delivery represents a double-edged sword. Since computer science is a relatively young field, challenges and pitfalls are to be expected. However, in seeking to bring greater awareness to both the promise and perils of digital technologies when used for music, this review may pave the way for more interactive hybrid systems that capitalize on the strengths of digital technologies and integrate them with human creativity. Future innovations in this field would therefore be neither entirely human-made nor entirely machine-made, but would combine the best of both.